5 Game-Changing AI Workflows You Should Use Instead of Buying More Tools

Discover 5 simple yet highly effective AI workflows using popular platforms to boost productivity wi…

Discover why Vietnamese SMEs spend 50–200 million on AI with no ROI. Our framework offers insights without a pitch deck. Written by Benocode Team from real cases.

"We signed a 50 million AI package last year. Now, we can only use the email summarization feature." — an intro from a recent discovery call with the founder of a trading company with 18 employees in District 7.

Not an isolated case. An internal survey by the Benocode Team across 30+ consultations for Vietnamese SMEs over the last 12 months found that nearly 60% have spent 30–200 million VND on at least one AI initiative (licensing tools, hiring freelancers, buying courses for the team) and struggle to measure clear ROI. Many describe the feeling of "knowing AI is necessary but wasting money aimlessly."

This isn’t an "AI is the future" article. It is about 5 specific reasons our team sees repeated across almost every failed case, and how to identify and fix them early, before wasting more money.

Who should read this: founders/CEOs/COOs of SMEs with 5–50 employees that have spent on AI and are questioning its effectiveness. Or those considering the next investment round and wanting to avoid mistakes.

Skip this if: you are looking for a "What is AI" article or a list of 100 tools. This article assumes you've already encountered AI.

This is the most common reason our team encounters. A founder reads about ChatGPT Enterprise or Jasper or Make.com, gets excited, and buys licenses for the whole team. Three months later, 2 out of 15 people are regular users, and the rest logged in once and forgot about it.

Why this happens: AI tools are marketed as a "Swiss Army knife" that solves every pain point. But the actual pain in the business remains undefined, so the team doesn't know what specifically to use the tool for and abandons it.

Easy-to-recognize symptoms:

How to fix it: Reverse the order. Before purchasing a tool, write down 3 lines on one piece of paper: the specific pain, the desired output, and the owner.

If you can't get through these 3 lines → don't buy. Any tool will just sit there.

This reason often accompanies Reason 1 but is worth separating due to a different fix.

Typical scenario: a company buys HubSpot, Zoho, or an AI content generation tool. After the account is set up, no one pushes data in. To actually use the tool, you must import a customer list, connect Meta Ads/Google Ads, sync Google Sheets, and write prompts that match the brand tone. This is not a "one-click" operation.

Result: the tool is empty, the AI output is generic, and the team loses faith after 2 weeks.

How much effort is really needed for a proper setup:

| Task | Time Required | Skills Required |
|---|---|---|
| Audit existing data (clean or dirty) | 4–8 hours | Intermediate Excel/Sheets |
| Import + normalize schema | 8–16 hours | API/no-code |
| Connect ad platform / e-commerce | 4–12 hours | OAuth + API documentation |
| Write prompt + brand voice template | 4–8 hours | Marketing + LLM |
| Train team + document processes | 4–8 hours | PM/ops |

Total: 24–52 hours. That translates to 1 part-time person over 1–2 weeks, or 1 full-time internal developer for 1 week.

How to fix: Before signing up for the next tool, ask the provider/agency directly: "Will you handle data integration + prompt templates, or just sell licenses?" If they only sell licenses → calculate the hours your team will spend and add them to TCO.
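As a rough illustration of adding setup hours to TCO, the sketch below sums the hour ranges from the setup table and adds them to a license fee. The function names, the 50 million VND license, and the 150,000 VND hourly rate are hypothetical placeholders, not figures from our cases; substitute your own.

```python
# Setup effort taken from the table above: (task, min_hours, max_hours).
SETUP_TASKS = [
    ("Audit existing data", 4, 8),
    ("Import + normalize schema", 8, 16),
    ("Connect ad platform / e-commerce", 4, 12),
    ("Write prompt + brand voice template", 4, 8),
    ("Train team + document processes", 4, 8),
]


def setup_hours(tasks):
    """Return (min_total, max_total) hours across all setup tasks."""
    return sum(t[1] for t in tasks), sum(t[2] for t in tasks)


def first_year_tco(license_cost, hourly_rate, tasks=SETUP_TASKS):
    """Return (low, high) cost: license fee plus internal setup time.

    license_cost and hourly_rate must use the same currency (e.g. VND).
    """
    low_h, high_h = setup_hours(tasks)
    return (license_cost + low_h * hourly_rate,
            license_cost + high_h * hourly_rate)


if __name__ == "__main__":
    print(setup_hours(SETUP_TASKS))  # (24, 52)
    # Hypothetical inputs: 50M VND license, 150k VND per internal hour.
    print(first_year_tco(50_000_000, 150_000))  # (53600000, 57800000)
```

Even with these placeholder numbers, the internal hours add several million VND on top of the license, which is the part a license-only quote leaves out.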

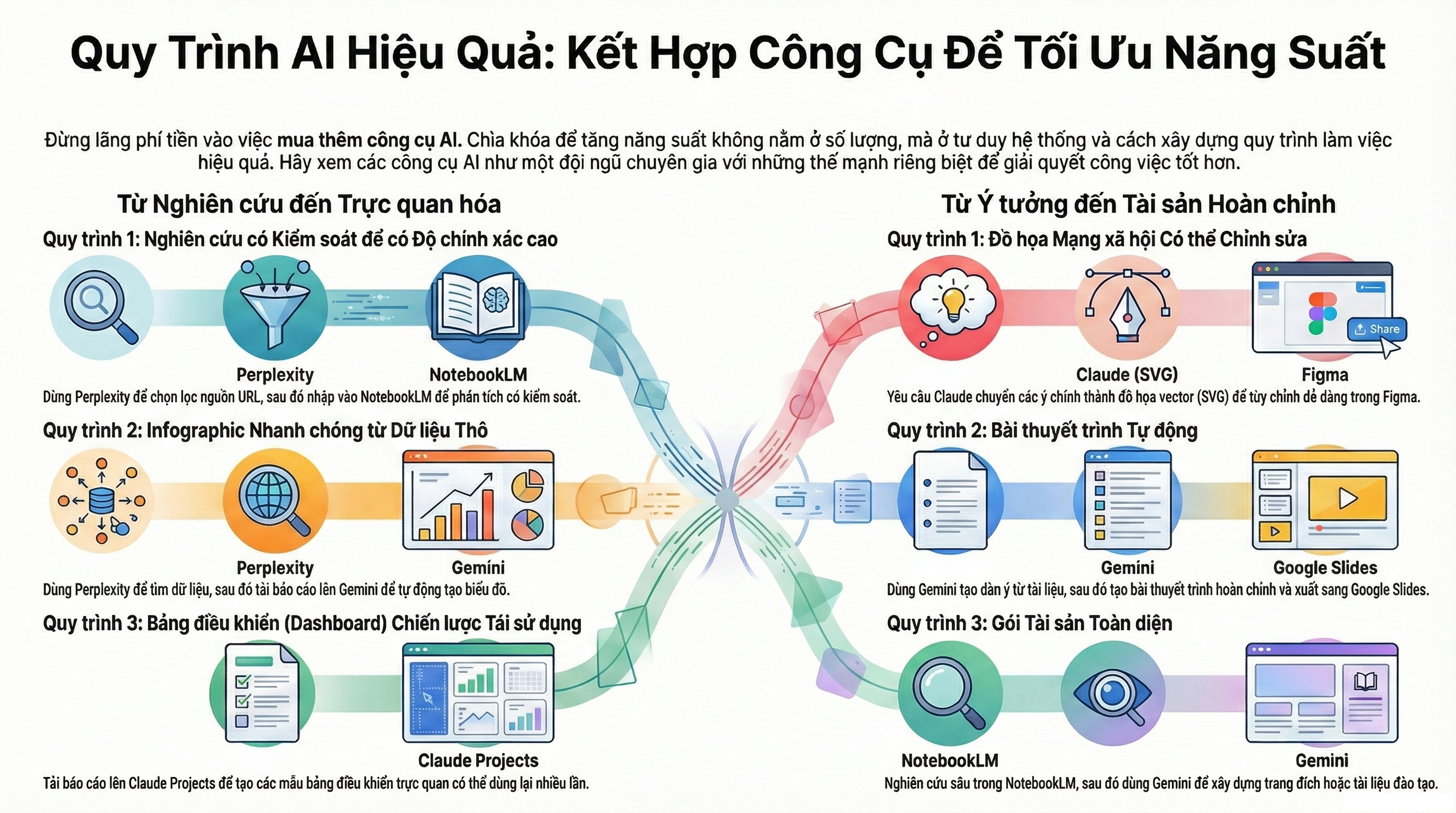

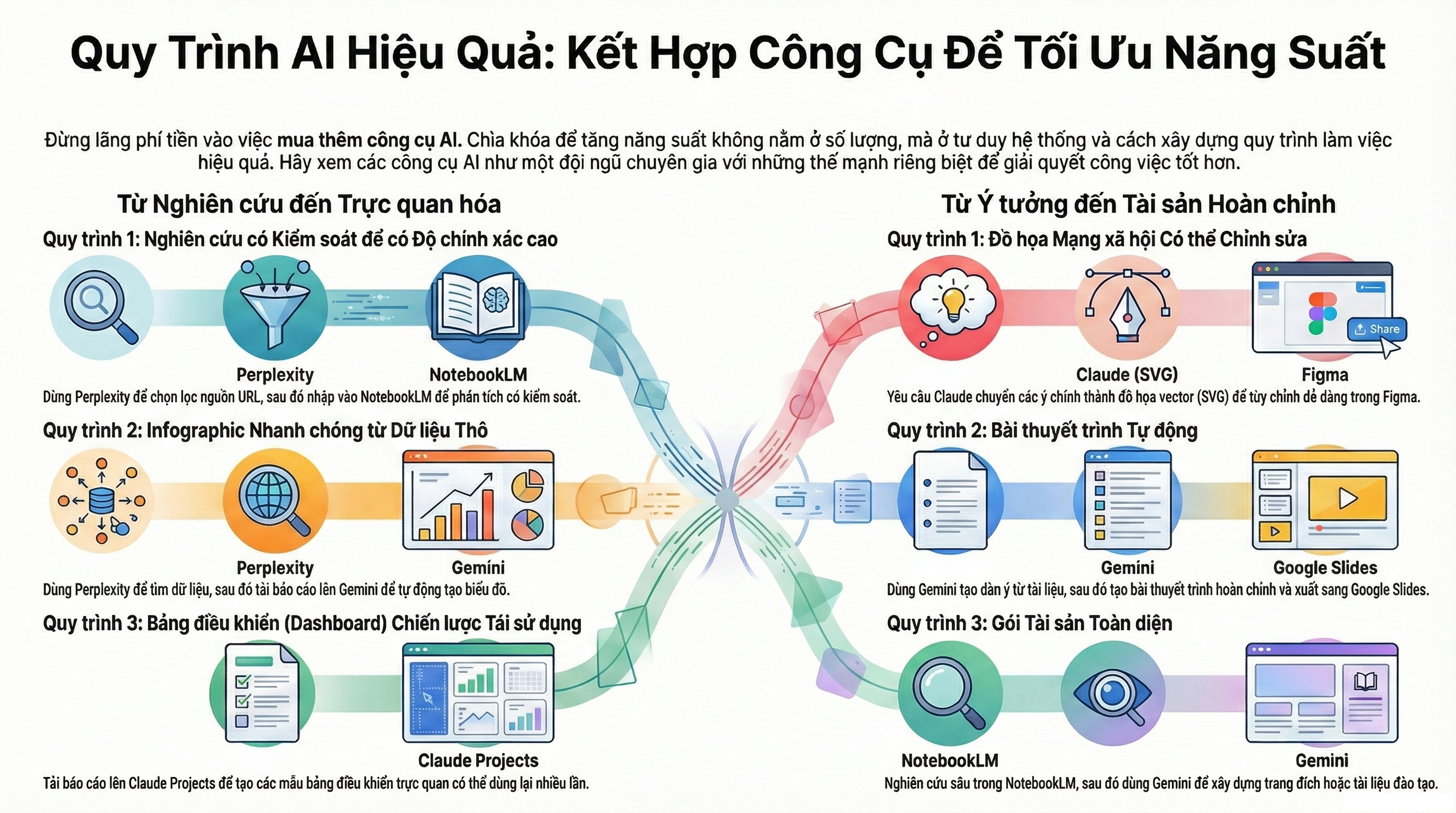

Another perspective: our team once wrote an article, "5 AI processes changing the game." Its takeaway was that most processes that truly generate ROI require dedicated data flows, not standalone prompts.

ChatGPT is a doorway — not the whole house. Many SMEs think "AI = ChatGPT", subscribe to Pro/Team, then stop. When they need repetitive workflows — copying orders from Shopee to Sheets, qualifying leads, sending daily reports — they still operate manually every day, pasting prompts into ChatGPT one by one.

Why they fall short: ChatGPT on the web doesn't retain long-term context, doesn't trigger itself on a schedule, and cannot connect to CRM/Sheet/Slack automatically. Each use requires manual copy-pasting. It saves 30% of the time per task, but it does not eliminate the task.

A practical stack for SMEs usually consists of 3 layers:

Orchestration layer → n8n / Make.com (no-code workflow programming)

↓

LLM layer → Claude API / OpenAI API (pay-per-use, 5–10× cheaper)

↓

Data layer → Google Sheet / Postgres / existing CRM

These 3 layers communicate with each other, running 24/7 without needing anyone to power them on. Lead nurture workflows, FAQ chatbots, daily reporting: all fit into this pattern.
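To show the shape of the three layers, here is a minimal plain-Python stand-in, not an actual n8n or Make.com workflow. Everything in it is an assumption made for illustration: `qualify_leads` plays the orchestration step, `fake_llm` replaces a real Claude/OpenAI API call so the sketch runs offline, and a list of dicts stands in for Sheet or CRM rows.

```python
from typing import Callable, Dict, List

Row = Dict[str, str]


def qualify_leads(rows: List[Row], llm: Callable[[str], str]) -> List[Row]:
    """Orchestration step: read unprocessed rows, ask the LLM, write back."""
    for row in rows:
        if row.get("status"):  # already processed on an earlier run
            continue
        prompt = (
            "Classify this lead as HOT or COLD. Reply with one word.\n"
            f"Message: {row['message']}"
        )
        answer = llm(prompt).strip().upper()
        # Anything unexpected is routed to a human instead of being guessed.
        row["status"] = answer if answer in {"HOT", "COLD"} else "REVIEW"
    return rows


def fake_llm(prompt: str) -> str:
    """Hypothetical stand-in for an LLM API call (keyword match only)."""
    return "hot" if "quote" in prompt.lower() else "cold"


if __name__ == "__main__":
    sheet = [
        {"message": "Please send a quote for 200 units", "status": ""},
        {"message": "Just browsing", "status": ""},
        {"message": "Old lead", "status": "COLD"},
    ]
    for row in qualify_leads(sheet, fake_llm):
        print(row["status"], "-", row["message"])
```

In a real setup, a scheduler or webhook in the orchestration tool would trigger this step, which is what removes the daily copy-pasting.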

Fix: Pick the most time-consuming repetitive process → build a workflow stack instead of using ChatGPT by hand. Refer to "5 n8n workflows for Vietnamese SMEs" or the guide to building an AI agent with n8n + Claude.

Typical costs: 25–60 million/workflow if hiring for a production-grade build, or 1–2 weeks of internal development + ~$10–30/month in API fees. This is much cheaper than all-in-one tool licenses, and you own the code rather than being locked into a vendor.

"I think the tool is fine" — the most common response we hear after 90 days of implementation. When further asked, "fine in what way?" → "the team feels it's faster."

How much faster? How many actual hours saved? Cost compared to baseline? No one measures it. When it's time to renew the license, the founder must decide based on feelings → a 50/50 call to cut or keep it, made arbitrarily.

Symptoms:

Fix: Before deploying the tool/agent, record 3 columns in a Sheet:

| Metric | Baseline (before AI) | Target after 90 days |
|---|---|---|
| Hours the team spends on task X | 20 hours/week | 8 hours/week |
| Cost / qualified lead | 80,000 VND | 40,000 VND |
| Time-to-response on Messenger | 4 hours | 15 minutes |

3 lines. Be specific with numbers. If after 90 days it's not met → the tool is not suitable for your case (not that "AI in general doesn't work"). Discard that tool without feeling guilty.
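The 90-day check can be sketched in a few lines. The baselines and targets below come from the table; the `actual` values, the `Metric` class, and the `review` helper are hypothetical and exist only to show the comparison.

```python
from dataclasses import dataclass


@dataclass
class Metric:
    name: str
    baseline: float
    target: float
    actual: float  # value measured after 90 days

    def met(self) -> bool:
        """True if the measured value reached the target.

        The improvement direction is inferred from baseline vs. target,
        so this works for metrics that should go down or go up.
        """
        if self.target <= self.baseline:
            return self.actual <= self.target
        return self.actual >= self.target


def review(metrics):
    """Return the names of metrics that missed their 90-day target."""
    return [m.name for m in metrics if not m.met()]


if __name__ == "__main__":
    # Response time is expressed in minutes (4 hours = 240 minutes).
    metrics = [
        Metric("Hours on task X per week", 20, 8, actual=9),
        Metric("Cost per qualified lead (VND)", 80_000, 40_000, actual=38_000),
        Metric("Messenger response time (min)", 240, 15, actual=12),
    ]
    print(review(metrics))  # ['Hours on task X per week']
```

A non-empty result is the signal to question that tool for that task, rather than AI in general.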

During the 90-day AI Performance Audit, my team starts every engagement with a 60-minute session to establish baseline + target metrics. Without this step, the rest of the engagement is just vibes.

This reason is sensitive because freelancers are 3-5 times cheaper than agencies. But AI implementation for production is very different from building a static landing page.

Actual gap:

| Factor | Freelancer ($) | Company with process ($$$) |
|---|---|---|
| Prototyping capability | ✅ Fast | ✅ Fast |
| Eval framework (measuring AI accuracy) | ❌ Rare | ✅ Default |
| Cost ceiling + alert | ❌ | ✅ |
| Monitoring + retry logic | ⚠️ Ad-hoc | ✅ Built-in |
| Ownership transfer (when leaving) | ⚠️ Includes their ‘secret sauce’ | ✅ Documentation, repo, runbook |
| Long-term maintenance | ❌ Must find new person | ✅ Contract retainer |
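To make the "cost ceiling + alert" and "retry logic" rows concrete, here is one possible sketch of a guard around an LLM call. It is an illustration under stated assumptions, not how any particular vendor implements it: `GuardedClient`, the `call` signature returning `(text, cost_usd)`, and the `alert` callback are all hypothetical names.

```python
import time


class BudgetExceeded(RuntimeError):
    """Raised when recorded spend has already reached the ceiling."""


class GuardedClient:
    def __init__(self, call, ceiling_usd, alert,
                 max_attempts=3, base_delay=1.0, sleep=time.sleep):
        self.call = call              # function(prompt) -> (text, cost_usd)
        self.ceiling_usd = ceiling_usd
        self.alert = alert            # function(message), e.g. post to Slack
        self.max_attempts = max_attempts
        self.base_delay = base_delay
        self.sleep = sleep            # injectable so tests need not wait
        self.spent_usd = 0.0

    def run(self, prompt):
        # The check happens before the call, so spend can overshoot the
        # ceiling by at most one call.
        if self.spent_usd >= self.ceiling_usd:
            self.alert(f"AI budget ceiling reached: ${self.spent_usd:.2f}")
            raise BudgetExceeded(prompt)
        for attempt in range(self.max_attempts):
            try:
                text, cost = self.call(prompt)
            except Exception as exc:
                if attempt == self.max_attempts - 1:
                    self.alert(f"AI call failed after "
                               f"{self.max_attempts} attempts: {exc}")
                    raise
                # Exponential backoff: base, 2x base, 4x base, ...
                self.sleep(self.base_delay * 2 ** attempt)
            else:
                self.spent_usd += cost
                return text
```

The point of the sketch is that failures and overspend both end in an alert someone sees, instead of a silent break months later.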

Actual consequences my team sees: an SME hired a freelancer to build an AI agent for 30 million. The agent ran fine for 2 months. In month 3, prompt drift occurred; in month 4, the Meta API changed → the agent broke. The freelancer had moved on to another project. No one could read the code (no docs, variable names like temp_var_2). Total rebuild cost: 80 million, higher than hiring a company from the start.

When a freelancer is right: a prototype/POC under 2 weeks, clear scope, no need for production. If it works → hand it over to a company for the production version.

When a company is right: the workflow goes into actual production, customers or the team use it daily, and AI errors carry significant costs (leads dropped, wrong customer replies, ad budget runaway).

How to fix: Before signing with a freelancer, ask 3 questions:

Hesitant answer → POC OK, but don't deploy to production.

Here are the 5 reasons compiled into a checklist. Print it, screenshot it, or save it in Notion, and open it every time you consider a new tool or freelancer:

[ ] 1. Have I written down the specific pain + desired output + owner?

[ ] 2. Who is responsible for setting up data + prompts + training the team?

(Have that person's hours + cost been added to TCO?)

[ ] 3. Is this workflow repetitive?

If yes → you need an n8n/Make + LLM API stack, not just ChatGPT.

[ ] 4. Is there a baseline metric + a 90-day target, in numbers?

[ ] 5. Does the implementation partner (freelancer/company) ship monitoring + documentation + a maintenance plan?

3 checks or fewer → do not invest this round. Revisit the unclear items.

5 checks → proceed.

If you've spent money on AI and doubt the ROI, book a 30-minute discovery call with my team:

This is part of the AI Performance Audit + 90-day Roadmap from Benocode Team. Free discovery call. If we continue collaborating, the main engagement starts from 12 million.

I've already spent 100 million, can it be salvaged or does it need a complete rebuild?

Depends. Our team finds ~70% of cases salvageable: they just need to fix Reason 2 (data integration) and Reason 4 (measuring ROI). Purchased tools don't necessarily need to be discarded. A complete rebuild is only needed if the tool fundamentally mismatches the business model (e.g., a B2B SaaS company buying tools built for a B2C use case).

Should an SME with 8 employees use AI, or is it too early?

Yes. But don't start with a ChatGPT Enterprise license for the whole team. Start with the 1 repetitive process that consumes the most hours each week: automate that first, measure ROI in 60 days, then scale further.

Is ChatGPT Plus at $20/month sufficient?

Sufficient for individual use or ad-hoc tasks. Not enough for workflows of 5+ people: a ChatGPT subscription does not include API access (the OpenAI API is billed separately), there is no audit log, and it cannot be integrated properly into n8n/Make. When the team needs to use AI daily, move to the Claude API or directly to the OpenAI API.

Do we need internal developers or can we outsource everything?

You need at least 1 internal "tech-savvy" person. No specialized coding is required, but this person must understand the workflows well enough to maintain + escalate. If there's zero internal capability → you must have a maintenance retainer with an agency, not a one-off project.

Will the discovery call have a sales pitch?

I do not close deals on discovery calls. If it's a fit, I'll send a proposal after 1-2 days. If it's not a fit, I'll clearly say "this case should be done internally" or refer you to a more suitable agency.

How long to see ROI after fixing the 5 reasons above?

Simple repetitive processes (lead capture, daily reports): 30-45 days. AI agent customer support: 60-90 days (because prompts + evaluations need tuning). Complex workflows across multiple systems: 90-120 days.

The 5 reasons above are not exhaustive. There are other layers (org change management, vendor lock-in, security/compliance), but those matter only after you've worked through these 5.

If you want:

Both options are cheaper than continuing to waste another 50 million without knowing where it goes.

Article by the Benocode Team. Data compiled from 30+ AI consultations for Vietnamese SMEs 2024–2026. Updated: 2026-05-09.
